As artificial intelligence continues to grow, the world’s data centers consume enormous amounts of electricity and generate noise and heat emissions, with major environmental consequences.

Some large AI facilities already use as much electricity as small cities, raising concerns about both energy costs and environmental impact. A giga-center currently being built in Utah will use more energy than the entire rest of the state.

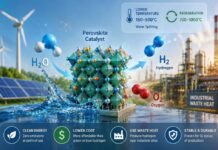

Therefore, researchers are now looking for new types of computer memory technology that can process and store data with far less energy.

A promising breakthrough

Researchers from POSTECH and Chungnam National University may have made an important breakthrough. They have developed a new memory technology that stores information using small temperature changes instead of large electrical currents – dramatically reducing energy consumption.

Their study was published in the journal Advanced Functional Materials.

The new system is based on a field called spintronics. Traditional electronics store and process information using the electrons’ electrical charge. Spintronics works differently: it uses a property called “spin”, which gives each electron a tiny magnetic orientation.

In spintronic devices, different spin directions represent the binary numbers 0 and 1 used in digital computing.

Spintronics has gained attention because it can enable faster and more energy-efficient computing. But most existing spintronic memory systems require strong electrical currents to switch spin directions. These currents generate heat and waste enormous amounts of energy.

Temperature instead of electricity

The research team wanted to avoid this problem by controlling the spin using temperature instead of electricity.

However, previous attempts at temperature-based control faced a major challenge: when the temperature returned to normal, the spin direction often changed back again. That meant that memory couldn’t reliably store information over time.

The researchers solved this with the help of a phenomenon called thermal hysteresis. Simply explained, it means that a system can remain in an altered state even after the original trigger has been removed.

To create this effect, the team built a structure of two magnetic materials: gadolinium-iron garnet and holmium-iron garnet. These materials react differently to temperature changes, creating a magnetic competition between them.

Like a tug of war

The researchers compare the process to a tug of war. Each material pulls the system’s magnetic direction its own way. When the temperature changes, one side becomes stronger and eventually pulls the system into a new stable state.

Most importantly, the system does not immediately switch back when the temperature changes again. The magnetic state remains stable, allowing the memory to store information without a continuous power supply – this is called non-volatile memory.
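The retention behavior described above can be illustrated with a toy model. The sketch below is not the published device physics: it assumes a simple two-threshold rule, and the class name `ThermalHysteresisCell` and both threshold temperatures are hypothetical values chosen only for illustration. It also ignores the weak magnetic field that the real device uses.

```python
from dataclasses import dataclass


@dataclass
class ThermalHysteresisCell:
    """Toy two-threshold model of one memory bit (illustrative only)."""

    t_low: float = 290.0   # hypothetical lower switching temperature, in kelvin
    t_high: float = 315.0  # hypothetical upper switching temperature, in kelvin
    state: int = 0         # stored bit, 0 or 1

    def apply_temperature(self, temperature: float) -> int:
        """Update the bit for a given temperature and return the stored value."""
        if temperature >= self.t_high:
            self.state = 1  # heating past the upper threshold writes a 1
        elif temperature <= self.t_low:
            self.state = 0  # cooling past the lower threshold writes a 0
        # Between the two thresholds the previous state is retained.
        return self.state


cell = ThermalHysteresisCell()
# Start at rest, heat, return to rest, cool, return to rest.
trace = [cell.apply_temperature(t) for t in (300.0, 316.0, 300.0, 289.0, 300.0)]
print(trace)  # [0, 1, 1, 0, 0]
```

The same resting temperature (300 K in this sketch) yields a 1 after heating and a 0 after cooling. That dependence on history is what lets a hysteretic system keep a bit after the trigger is removed.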

The researchers were able to switch memory states with only small temperature changes of about 25 kelvin, combined with a relatively weak magnetic field.

Up to 66 times lower energy consumption

Compared to today’s spin-orbit torque memory technologies, the new method cut energy consumption by a factor of up to 66. Under ideal conditions, the reduction can reach a factor of 452.
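To put those factors in perspective, the short calculation below converts an "N times lower" figure into the fraction of baseline energy that remains. It is plain arithmetic on the two factors quoted in the article. The function name `remaining_fraction` is made up for this example, and the baseline is left abstract because the article gives no absolute energy values.

```python
def remaining_fraction(reduction_factor: float) -> float:
    """Fraction of the baseline energy still needed after an N-fold reduction."""
    return 1.0 / reduction_factor


for factor in (66, 452):
    frac = remaining_fraction(factor)
    print(f"{factor}x lower: {frac:.2%} of baseline, {1 - frac:.2%} saved")
# 66x lower: 1.52% of baseline, 98.48% saved
# 452x lower: 0.22% of baseline, 99.78% saved
```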

According to Professor Hyungyu Jin, the work shows that memory devices may one day function using small thermal changes instead of large electrical currents.

While the technology is still in the research phase, it could eventually help develop ultra-low-power memory systems for AI computers, mobile devices, and future data centers – reducing both energy costs and environmental impact.

Of course, none of the data centers being built now use the new technology.